Built desks that procedurally add props around themselves when they’re placed in a scene, so placing a bunch of them together produces varied content.

Tag Archives: City

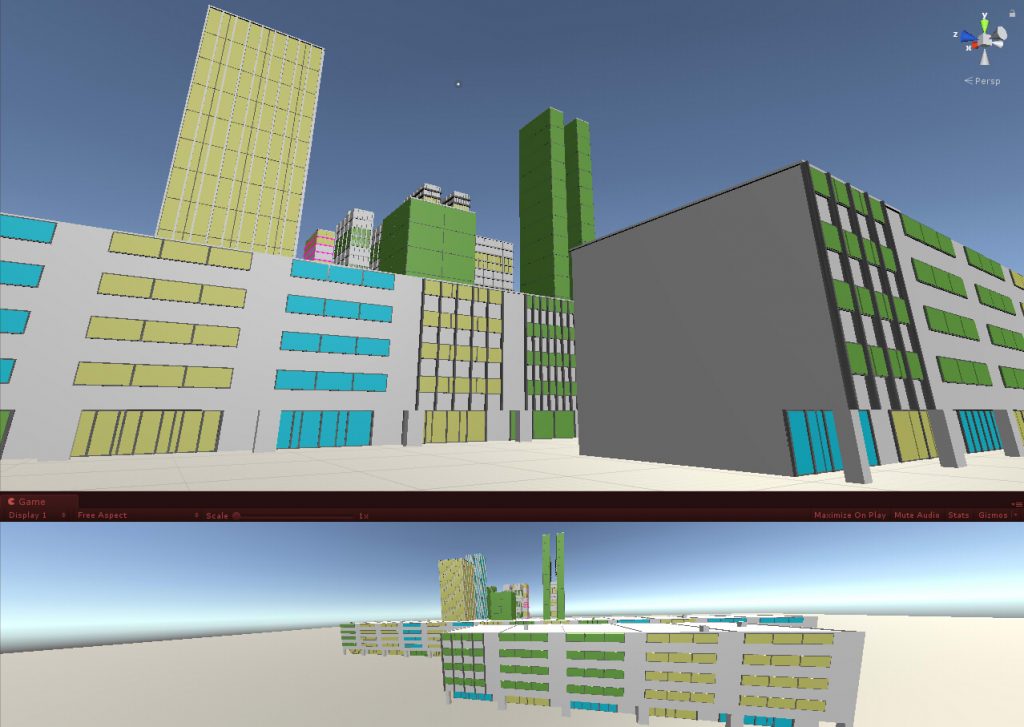

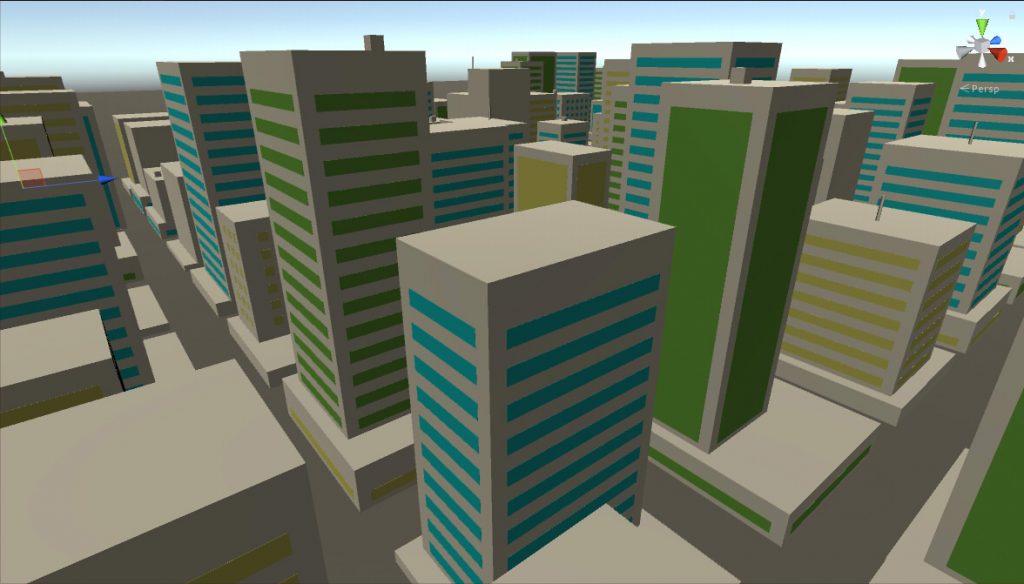

Building a Procedural City Generator with SNAPS, gradual buildup.

Updated the ProcGen City Builder to build up the SNAPS parts gradually. Also tested the ability to group prefabs of furniture within a room of the building, because if I can do one, I can certainly do more.

Building a procedural city generator in Unity with SNAPS

Took a break from this project between September 2018 and July 2019; had switched jobs and had to spend time adapting to being a Producer again. However, in mid-August of 2019, Unity released a set of prototyping assets called SNAPS. Was surprised to discover that the height measurement for SNAPS was precisely the one I used, which meant that adapting the SNAPS prototyping assets for use in my Procedural City Generator would be doable.

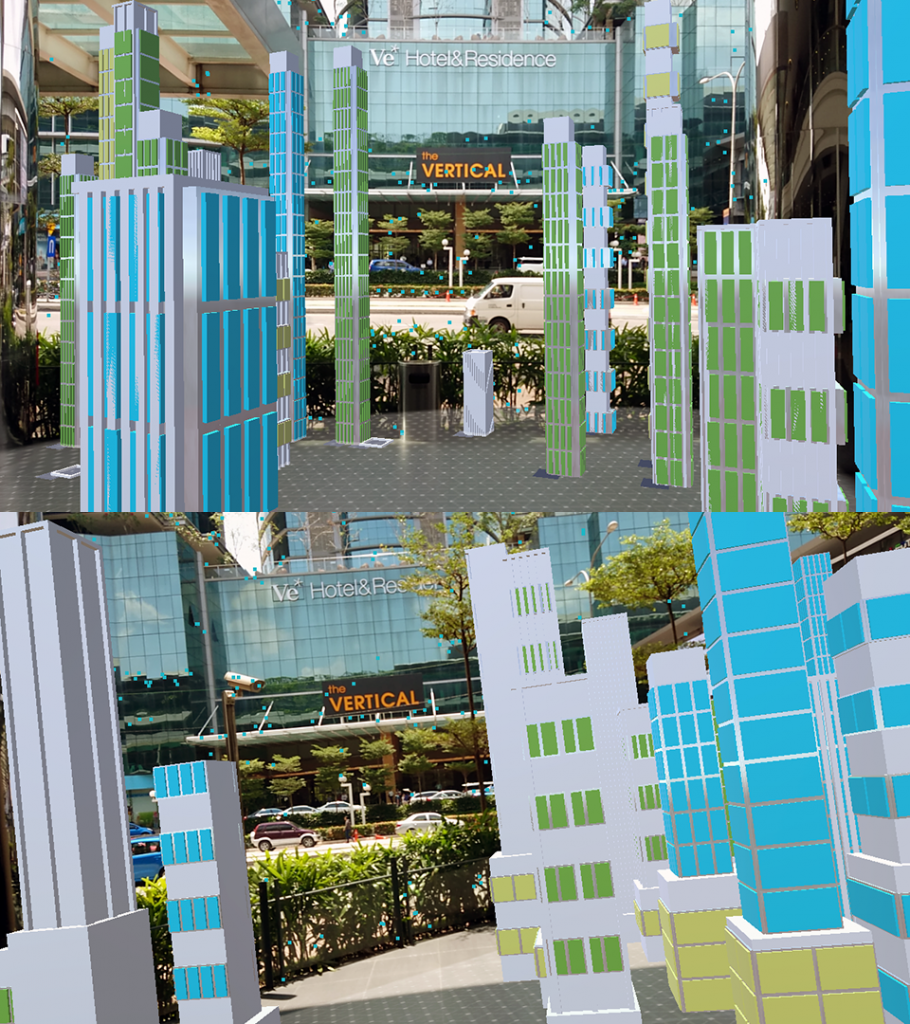

Building a procedural city generator in Unity – AR version!

So far, the buildings generated were accurately scaled. In August 2018 I started experimenting with AR and discovered that a real-size skyscraper in AR isn’t that easy to view on a phone! So I added a Minimize mode, where the buildings could be scaled down to be viewable on an AR-detected floor.

The implementation is admittedly a hack, and since some parts of the code couldn’t figure out what to do with the new scale, issues with z-fighting and object placement appeared. But on the whole, it’s a version I can show to people on my phone, so I just need to clean up my code.
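As a rough illustration of what a Minimize mode has to compute, here is a minimal, engine-agnostic sketch in Python. It is not the project's actual code: the `Bounds` type, the `minimize_scale` function and the target sizes are all hypothetical. The idea is to pick one uniform factor so the whole building fits both a target height and a target footprint on the detected floor.

```python
from dataclasses import dataclass


@dataclass
class Bounds:
    """Axis-aligned size of a generated building, in metres (hypothetical type)."""
    width: float   # x
    height: float  # y
    depth: float   # z


def minimize_scale(building: Bounds, target_height: float, max_footprint: float) -> float:
    """Return one uniform scale factor that fits the building inside the targets.

    The factor never exceeds 1.0, so small buildings are left at real size.
    """
    if min(building.width, building.height, building.depth) <= 0:
        raise ValueError("building dimensions must be positive")
    by_height = target_height / building.height
    by_footprint = max_footprint / max(building.width, building.depth)
    return min(1.0, by_height, by_footprint)


# A 120 m tower on a 30 m x 30 m plot, shown as a 60 cm model on a table.
tower = Bounds(width=30.0, height=120.0, depth=30.0)
factor = minimize_scale(tower, target_height=0.6, max_footprint=0.5)
scaled_height = tower.height * factor
```

Applying a single factor like this at one parent object, rather than rescaling each part separately, is one common way to keep child offsets consistent with each other; whether that would resolve the issues described above is an open question.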

Building a procedural city generator in Unity

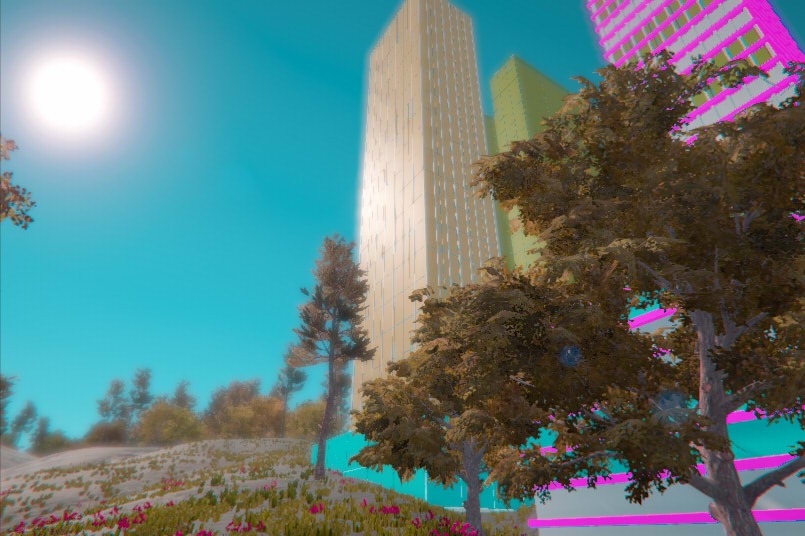

Since the generator works on any physics-enabled surface in Unity, I can add the code to any scene, click and drag on such a surface, and generate a building on it. Thus, when I got Gaia around the end of June, I was able to generate procedural buildings within a generated landscape scene.

Added a Post-Processing Stack effect for fun.

Building a procedural city generator in Unity

In June, I experimented with generating different types of buildings. The new building type is a Southeast Asian shophouse of the kind commonly seen in Malaysia and Singapore: a row of shops chained into a single long building that has 2-5 floors on top, with a shaded five-foot way or “kaki lima” along the front of the ground floor.

Building a procedural city generator in Unity

Around April, I was trying out one of Brackeys’ tutorials, where he demonstrated raycasting code to direct AI units. I modified the code so that I could select an area with the mouse and generate a building out of that area.
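The area-selection step can be sketched independently of the engine. The Python below is a hypothetical illustration, not the code from the project: it assumes the raycasts have already produced two ground hit points (where the drag started and where it ended) and turns them into a rectangular footprint. The function name `footprint_from_drag` and the `min_size` threshold are made up for this example.

```python
def footprint_from_drag(start, end, min_size=1.0):
    """Turn two (x, z) ground hit points into a building footprint.

    Returns (center_x, center_z, width, depth), or None when the dragged
    area is smaller than min_size along either axis.
    """
    # Sort each axis so the drag direction does not matter.
    x0, x1 = sorted((start[0], end[0]))
    z0, z1 = sorted((start[1], end[1]))
    width, depth = x1 - x0, z1 - z0
    if width < min_size or depth < min_size:
        return None  # treat tiny drags as accidental clicks
    return ((x0 + x1) / 2, (z0 + z1) / 2, width, depth)


# Dragging from the far corner back toward the origin gives the same footprint
# as dragging the other way.
plot = footprint_from_drag((10.0, 2.0), (4.0, 8.0))
```

In Unity the two points would presumably come from `Physics.Raycast` hits on a ray built with `Camera.ScreenPointToRay`, one on mouse down and one on mouse up.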

Building a procedural city generator in Unity

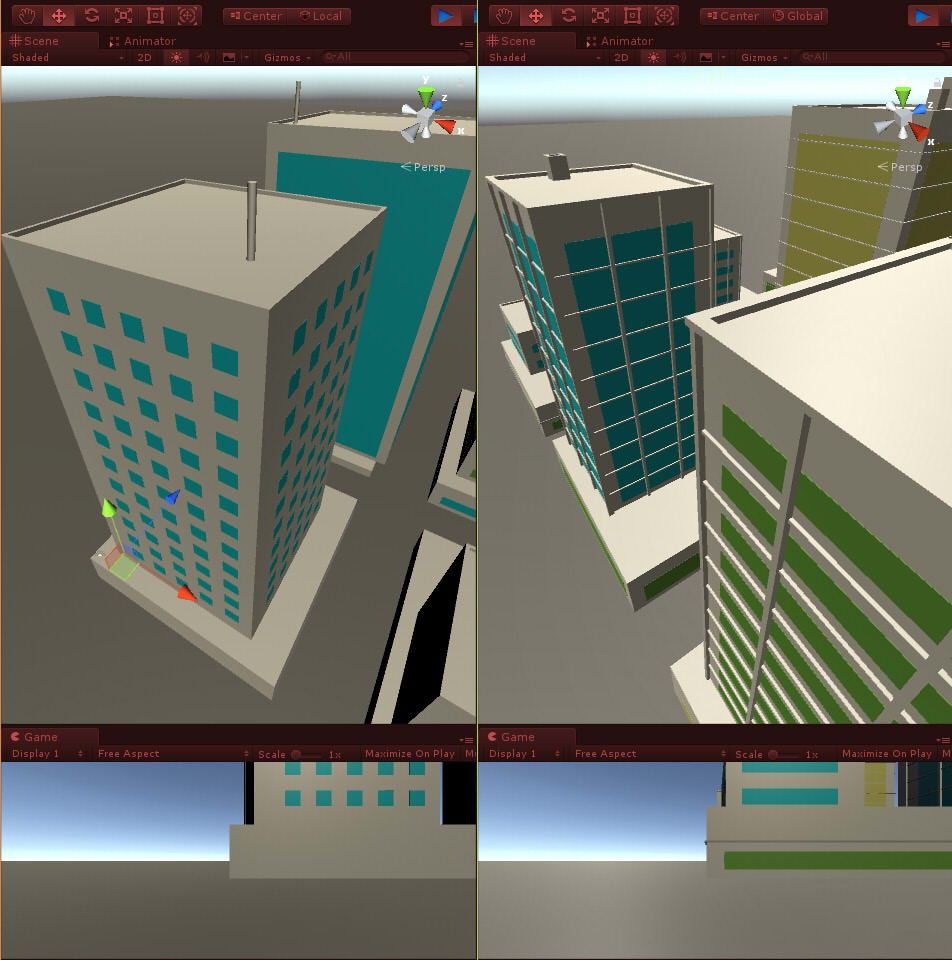

The next version, on January 19th, 2018, added rules to window generation and applied the edge algorithm around the windows. With materials assigned to the generated GameObjects, the generated buildings became more attractive to the eye.

Building a procedural city generator in Unity

On January 12th, 2018 I added the ability to generate finer detail on the rooftop and grid-like facades on the building walls. The added edges on top of the building created a more believable look, and I was able to adapt the code to create randomized grid patterns on the walls.

The code still isn’t perfect; the top building on the left has a definite overhang. Part of the work was figuring out where the rules failed to generate believable content and correcting them as I went along.
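For readers curious what a randomized grid pattern on a wall might look like in code, here is a minimal Python sketch. It is an assumption-laden illustration rather than the generator's real logic: `wall_grid`, its parameters and the cell size range are all hypothetical. It picks a random cell size, then stretches the cells slightly so they tile the wall exactly, which is one simple way to keep a facade from overhanging its wall.

```python
import random


def wall_grid(width, height, rng, min_cell=1.0, max_cell=3.0):
    """Split a wall into a grid of equal cells with a randomized cell size.

    Returns a list of (x, y, cell_width, cell_height) tuples measured from
    the wall's bottom-left corner.
    """
    # Random "wish" size for the cells of this wall.
    wish_w = rng.uniform(min_cell, max_cell)
    wish_h = rng.uniform(min_cell, max_cell)
    # Whole number of cells per axis, at least one.
    cols = max(1, int(width // wish_w))
    rows = max(1, int(height // wish_h))
    # Stretch the cells so they cover the wall exactly, with no remainder.
    cell_w, cell_h = width / cols, height / rows
    return [
        (c * cell_w, r * cell_h, cell_w, cell_h)
        for r in range(rows)
        for c in range(cols)
    ]


rng = random.Random(2018)  # seeded so a given building can be regenerated
cells = wall_grid(width=12.0, height=30.0, rng=rng)
```

Passing in a seeded `random.Random` instead of using the module-level functions keeps each wall reproducible, which helps when tracking down the cases where the rules produce something unbelievable.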

Building a procedural city generator in Unity

The next version, on January 8th, 2018, refined the arrangement of blocks to make the layout more realistic (e.g. adding believable corners so that the windows seem feasible), and added a ground section to the buildings. The ground section allowed me to define the area the building would occupy and to randomize the scale of the upper section of the buildings. The windows are now thin slices instead of blocks, so they can be arranged within a defined wall region.
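Two of the ideas in this post lend themselves to a short sketch: shrinking the upper section relative to the ground section, and arranging thin windows within a wall region. The Python below is only a guess at the general shape of such rules, not the project's code. The function names, the 0.6 to 1.0 ratio range and the margin and gap parameters are all hypothetical.

```python
import random


def upper_section_size(ground_w, ground_d, rng, min_ratio=0.6, max_ratio=1.0):
    """Randomly scale the upper section so it never exceeds the ground section."""
    return (
        ground_w * rng.uniform(min_ratio, max_ratio),
        ground_d * rng.uniform(min_ratio, max_ratio),
    )


def window_slots(wall_width, window_width, gap, margin):
    """Return the left edge of each window along one wall.

    Windows are kept `margin` away from both corners, separated by `gap`,
    and the whole row is centred in the usable region.
    """
    usable = wall_width - 2 * margin
    if usable < window_width:
        return []  # wall too narrow for even one window
    count = int((usable + gap) // (window_width + gap))
    used = count * window_width + (count - 1) * gap
    start = margin + (usable - used) / 2
    return [start + i * (window_width + gap) for i in range(count)]


rng = random.Random(8)
upper_w, upper_d = upper_section_size(20.0, 15.0, rng)
slots = window_slots(wall_width=10.0, window_width=1.0, gap=0.5, margin=1.0)
# slots == [1.5, 3.0, 4.5, 6.0, 7.5]
```

Keeping a margin at each end of the wall is one way to leave room for corners, in the spirit of the "believable corners" mentioned above.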